How to architect IoT sensor networks for digital twins

Key Points

- The Maturity Spectrum: Connected products span from telemetry to predictive analytics to full digital twins; not every product needs to reach the top, but your architecture should leave the option open if needed.

- Physics-First Data: Twin-ready IoT sensor networks prioritise meaningful features, high-fidelity measurements, and temporal alignment over sheer data volume to ensure models reflect real-world behaviour.

- Strategic Partitioning: Balancing edge autonomy for real-time resilience with cloud depth for fleet-level context is critical, as it defines both the intelligence and survivability of the system.

Ben Mazur

Managing Director

Designing connected products that can support digital twins

Digital twins are far more than a ‘visualisation’ technology that mirrors a physical asset in the real world, be it a smart car or a human being. When designed properly, they become a continuous feedback loop between the physical and digital worlds – enabling simulation, prediction, and optimisation in near real time. For those working in the IoT space or developing connected products, the concept and application of a digital twin are particularly compelling.

At its core, IoT (the Internet of Things) is about connecting sensors (e.g., a wearable chest patch), gathering data (e.g., heart rate and body temperature), and deriving insights (e.g., early disease detection). IoT sensor networks for digital twins build on this foundation and add a layer of meaning – enabling us to move from reactive data (“what just happened?”) to insight (“what’s happening now?”) to foresight (“what’s likely to happen next?”). In some cases, we could even take this a step further and add scenario testing (“what if the wearer’s usage pattern changes?”).

For IoT, digital twins bridge basic data collection and intelligent action. They show customers and investors that the device is connected, adaptive, and continuously learning.

And while there are plenty of notable examples of digital twins deployed at a macro scale (e.g., industrial manufacturing and smart cities building digital models of their transport systems), this technology isn’t reserved for big-budget, large-scale deployments. Thanks to affordable IoT hardware, cloud infrastructure, and edge platforms, small businesses and startups can build and deploy digital twins of small systems or even prototypes of new devices.

But there’s a catch.

A digital twin is only as robust as the sensor network that feeds it.

Consider a small business that designs and manufactures a connected product focused on connectivity and basic telemetry, and only later realises it wants predictive modelling or digital twin capabilities. By that point, many of the architectural decisions that determine whether this is possible (e.g., sampling frequency, sensor placement, calibration processes, data schemas, edge filtering) have already been locked in, and the team must work within the constraints already embedded in the product’s sensor network.

Designing IoT sensor networks that can support scalable digital twins requires thinking beyond connectivity. It involves making decisions about observability, fidelity, latency, data governance, and long-term evolution, even when still at the prototype stage.

This post explores how to architect IoT sensor networks that can genuinely support scalable digital twins – not just in enterprise environments, but in startups, small businesses, and growing product ecosystems. We’ll examine the design principles, architectural trade-offs, and hidden failure modes that teams encounter as they move from “let’s design a connected device” to “how do we build an intelligent system.”

From telemetry and predictive analytics to digital twins: Which is the best fit for your product?

Most connected products start with telemetry (the automated, remote collection of data). Sensors capture signals, devices transmit them, and dashboards display the results. Thresholds trigger alerts, giving users visibility into usage patterns, environmental conditions, or performance metrics.

This alone can be transformative, and for some products and monitoring devices (e.g., a fall detection system for elderly care), simply knowing what is happening in the present moment is enough. These products serve their purpose at the telemetry layer, and there’s no need to anticipate future states or model system behaviour, because their inherent value lies in the immediate response they’re designed to provide.

Predictive analytics comes into play when teams want to take it a step further. By analysing probabilistic patterns in historical telemetry data, product teams can detect trends, identify anomalies, or forecast likely outcomes. Instead of reacting to an event, they begin to anticipate it, thereby moving from “what just happened?” to “what’s likely to happen next?”

Environmental forecasting sensors are a clear example of this layer. They gather environmental telemetry (temperature, water flow, air quality, and other variables) and use historical patterns to predict near-future states. This predictive capability provides actionable insight without needing a full digital twin. For these products, trend-based forecasting alone meets the goal.
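As a rough illustration of trend-based forecasting, the sketch below fits a straight line to historical telemetry and extrapolates it. The water-level readings and the names `fit_linear_trend` and `forecast` are hypothetical; a real forecasting product would likely use a richer model that handles seasonality and noise.

```python
from statistics import mean


def fit_linear_trend(times, values):
    """Ordinary least-squares fit of values = slope * t + intercept."""
    t_bar, v_bar = mean(times), mean(values)
    sxx = sum((t - t_bar) ** 2 for t in times)
    sxy = sum((t - t_bar) * (v - v_bar) for t, v in zip(times, values))
    slope = sxy / sxx
    return slope, v_bar - slope * t_bar


def forecast(times, values, t_future):
    """Extrapolate the fitted trend to a future time."""
    slope, intercept = fit_linear_trend(times, values)
    return slope * t_future + intercept


# Hypothetical telemetry: hourly water level in centimetres.
hours = [0, 1, 2, 3, 4]
water_level_cm = [10.0, 10.5, 11.0, 11.5, 12.0]
print(forecast(hours, water_level_cm, 6))
```

Nothing here models the river or the sensor itself; the forecast comes purely from the pattern in past readings, which is the defining trait of this layer.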

A digital twin goes another step further by focusing on state synchronisation. Rather than only analysing patterns in past data, it attempts to represent the system itself: its components, states, and interactions in a structured digital form. Continuous sensor updates enable teams to simulate changes, test “what if” scenarios, and explore outcomes under different conditions. This enables closed-loop control: the ability to simulate a fix in the twin before automatically pushing a command back to the physical device to optimise its performance. A digital twin doesn’t just predict a trend; it models the machine’s structural logic and helps optimise it.
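To make the “simulate before you command” idea concrete, here is a deliberately simplified sketch: a one-state thermal model that is synchronised from a sensor reading and then used to test candidate load settings. The `ThermalTwin` class, its first-order cooling model, and every parameter value are illustrative assumptions, not a description of any real device.

```python
from dataclasses import dataclass


@dataclass
class ThermalTwin:
    temperature_c: float    # current state, synchronised from the sensor
    ambient_c: float        # assumed constant ambient temperature
    cooling_rate: float     # assumed fraction of the gap to ambient closed per step
    heat_per_step_c: float  # assumed heating per step at 100% load

    def sync(self, measured_c):
        """State synchronisation: overwrite the model state with a measurement."""
        self.temperature_c = measured_c

    def simulate(self, load, steps):
        """Project the temperature forward without changing the live state."""
        temp = self.temperature_c
        for _ in range(steps):
            temp += load * self.heat_per_step_c
            temp -= self.cooling_rate * (temp - self.ambient_c)
        return temp


def choose_load(twin, candidates, limit_c, steps):
    """Pick the highest load whose simulated end temperature stays within the limit."""
    safe = [load for load in candidates if twin.simulate(load, steps) <= limit_c]
    return max(safe) if safe else min(candidates)


twin = ThermalTwin(temperature_c=60.0, ambient_c=20.0,
                   cooling_rate=0.1, heat_per_step_c=6.0)
twin.sync(70.0)  # latest sensor reading
command = choose_load(twin, [0.5, 0.75, 1.0], limit_c=70.0, steps=10)
print(command)   # the value that would be sent back to the device
```

The “what if” runs happen entirely inside the model; only the chosen command would touch the physical device.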

Building a digital twin isn’t about adding a model or complexity just for the sake of it. It relies on engineering a sensor network that captures the right variables, with the appropriate resolution and spatial-temporal consistency (i.e., timing and context). While telemetry provides visibility, and predictive analytics enables foresight, IoT sensor networks for digital twins provide a structured understanding of the system and a level of insight that only emerges when the underlying architecture to support it has been built in.

For growing teams, this isn’t just theoretical. Decisions made early (sensor placement, sampling frequency, calibration routines, data formats, and firmware flexibility) will determine whether future predictive or twin capabilities are feasible. The goal isn’t to claim that every product must have a digital twin, but to ensure your initial architecture doesn’t accidentally lock the door on higher-order intelligence as the product evolves.

Core design principles for IoT sensor networks to enable digital twins

Building a digital twin-ready product isn’t just about adding more sensors. It’s about designing a sensor network that captures meaningful data reliably and consistently, and that scales. Early architectural choices have consequences and determine whether your data eventually fuels a high-fidelity simulation or merely fills a database with expensive noise.

Importantly, as previously stated, not every connected product needs to have a digital twin. But if you’re intending your product to evolve toward simulation or system-level modelling, the design decisions outlined below are foundational.

1. Capture High-Fidelity Features, Not Just “Big Data”

It’s tempting to measure everything, but volume is not a proxy for value. Whether you’re building physics-based models or machine learning systems, this becomes a question of feature engineering: identifying the specific signals that define your system’s ‘ground truth’.

- Avoid Overfitting: Collecting irrelevant variables can lead models to find correlations that don’t exist in the physical world.

- Identify Proxy Signals: If you can’t measure a state directly (e.g., internal engine temperature), determine the related variables that will allow you to derive it reliably.

- Filter at the Source: Quality is better than quantity. High-integrity data makes downstream analysis more computationally efficient and more trustworthy.

2. Balance Sampling Frequency with Physics and Power

How often you capture data should be informed by signal theory – particularly when measuring fast transients, vibration, or rapidly changing dynamics. In these contexts, principles like the Nyquist-Shannon sampling theorem matter: to reconstruct high-frequency behaviour accurately, the sampling rate must exceed twice the highest frequency present in the signal.

But because many IoT systems measure slower-moving state variables, the real question becomes: what temporal resolution does the physics of your system require?

- Capture Transients: Sample too sparsely and you miss the spike that signals a looming failure, or fold high-frequency content into misleading low-frequency artefacts (aliasing). Sample too frequently and you overwhelm your power budget, bandwidth, and storage.

- Dynamic Sampling: Consider event-based strategies that capture high-frequency data during anomalies while maintaining a low-power “heartbeat” during steady-state operation.

Your sampling strategy then becomes more of an architectural decision than a data-related one.
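The two ideas above can be sketched in a few lines: a minimum sample rate derived from the Nyquist criterion, and an event-based sampler that switches from a slow heartbeat to a fast burst when a reading departs from its baseline. The 2.5x margin, the fixed baseline, and the `AdaptiveSampler` class are assumptions chosen for illustration.

```python
def min_sample_rate_hz(highest_signal_hz, margin=2.5):
    """Nyquist needs more than 2x the highest frequency; the margin adds headroom."""
    if margin <= 2.0:
        raise ValueError("margin must exceed 2 to satisfy the Nyquist criterion")
    return highest_signal_hz * margin


class AdaptiveSampler:
    """Returns the wait before the next sample: heartbeat normally, burst after an anomaly."""

    def __init__(self, baseline, threshold, heartbeat_s, burst_s, burst_samples):
        self.baseline = baseline            # assumed steady-state value
        self.threshold = threshold          # deviation that counts as an anomaly
        self.heartbeat_s = heartbeat_s
        self.burst_s = burst_s
        self.burst_samples = burst_samples  # fast samples taken after each anomaly
        self.remaining_burst = 0

    def next_interval(self, reading):
        if abs(reading - self.baseline) > self.threshold:
            self.remaining_burst = self.burst_samples
        if self.remaining_burst > 0:
            self.remaining_burst -= 1
            return self.burst_s
        return self.heartbeat_s


print(min_sample_rate_hz(400.0))  # rate needed for content up to 400 Hz

sampler = AdaptiveSampler(baseline=1.0, threshold=0.5,
                          heartbeat_s=60.0, burst_s=0.01, burst_samples=3)
readings = [1.0, 1.1, 2.0, 1.0, 1.0, 1.0, 1.0]
print([sampler.next_interval(r) for r in readings])
```

A fixed baseline keeps the example short; in practice the baseline would probably be a rolling estimate so that slow, legitimate changes do not trigger bursts.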

3. Ensure Sensor Placement Reflects Physical Reality

The fidelity of a digital twin depends on sensors being placed where the physics actually happen. A temperature sensor 2cm from a heat source versus 10cm away can produce two entirely different models.

- Document the “As-Built” State: Record precise locations, orientations, and mounting methods.

- Manage Sensor Drift: Standardise calibration routines to account for environmental degradation over time. Without a plan for drift, your twin will eventually “hallucinate” problems that aren’t there.

A model can only be as accurate as the assumptions embedded in its measurements.
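One lightweight way to keep these assumptions visible is to store them as data. The sketch below pairs a hypothetical as-built record with a simple two-point linear calibration; the field names, the choice of datum, and the linear correction are assumptions, and many sensors need non-linear or temperature-dependent compensation instead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AsBuiltRecord:
    sensor_id: str
    position_mm: tuple      # (x, y, z) relative to a datum the team defines
    orientation_deg: tuple  # (roll, pitch, yaw)
    mounting: str           # e.g. "adhesive" or "M3 screw"


@dataclass(frozen=True)
class Calibration:
    gain: float
    offset: float

    def apply(self, raw):
        """Convert a raw reading into a corrected value."""
        return self.gain * raw + self.offset


def two_point_calibration(raw_low, ref_low, raw_high, ref_high):
    """Derive gain and offset from two readings taken against known references."""
    gain = (ref_high - ref_low) / (raw_high - raw_low)
    offset = ref_low - gain * raw_low
    return Calibration(gain, offset)


record = AsBuiltRecord("temp-01", (20.0, 0.0, 5.0), (0.0, 0.0, 90.0), "adhesive")
cal = two_point_calibration(raw_low=1.0, ref_low=0.0, raw_high=51.0, ref_high=100.0)
print(record.sensor_id, cal.apply(26.0))
```

Re-running the calibration on a schedule and storing each result alongside the as-built record gives the twin a history of how each sensor has drifted.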

4. Prioritise Temporal Alignment and Context

A digital twin requires state synchronisation. If Sensor A and Sensor B are not time-aligned, you cannot accurately reconstruct the sequence of events.

- Clock Synchronisation: Use appropriate time protocols (e.g., NTP or PTP) to ensure devices share a consistent temporal reference.

- Semantic Interoperability: Ensure data includes metadata (e.g., units, device states, environmental conditions) so the twin understands why a variable changed, not just that it did.

- Provenance: Track data from sensor to cloud to preserve integrity for audit, compliance, or future model training.

Without temporal and semantic alignment, high-resolution data can still produce low-resolution insights.
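As a minimal sketch of temporal alignment, the function below pairs each sample from one sensor with the nearest-in-time sample from another and refuses to pair anything outside a tolerance. It assumes both streams already carry timestamps from a shared clock; the 50 ms tolerance and the sample values are hypothetical.

```python
from bisect import bisect_left


def align_nearest(reference, other, tolerance_s):
    """Pair each (timestamp, value) in reference with the nearest sample in other.

    Both lists must be sorted by timestamp. Samples with no neighbour inside
    the tolerance are paired with None rather than a misleading value.
    """
    other_times = [t for t, _ in other]
    aligned = []
    for t, value in reference:
        i = bisect_left(other_times, t)
        candidates = [j for j in (i - 1, i) if 0 <= j < len(other)]
        best = min(candidates, key=lambda j: abs(other_times[j] - t), default=None)
        if best is not None and abs(other_times[best] - t) <= tolerance_s:
            aligned.append((t, value, other[best][1]))
        else:
            aligned.append((t, value, None))
    return aligned


temperature = [(0.0, 20.1), (1.0, 20.4), (2.0, 20.9)]
vibration = [(0.02, 0.3), (1.4, 0.5), (2.01, 0.9)]
for row in align_nearest(temperature, vibration, tolerance_s=0.05):
    print(row)
```

Returning `None` for unmatched samples makes the gap explicit, so the model can treat it as missing data instead of silently pairing readings from different moments.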

5. Instrument the Sensor Network Itself

A digital twin is only as trustworthy as the health of its sensor network. If a sensor degrades silently, the twin will keep modelling confidently but incorrectly.

- Monitor Network Health: Track packet loss, latency, and connectivity drops.

- Track Sensor Confidence: Battery degradation, calibration history, and environmental stress should inform data quality scoring.

- Detect Drift Early: Build self-diagnostics into the network so degraded inputs don’t quietly distort the model.

In other words: observe the system and observe the observers.
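A data quality score can start very simply. The sketch below combines battery level, calibration age, and packet loss into a single confidence value by taking the weakest of the three. The 180-day calibration interval and the "weakest factor wins" rule are assumptions; a production system would tune both against observed failures.

```python
def packet_loss_ratio(expected, received):
    """Fraction of expected packets that never arrived."""
    if expected <= 0:
        raise ValueError("expected must be positive")
    return (expected - received) / expected


def confidence_score(battery_fraction, days_since_calibration, packet_loss,
                     calibration_interval_days=180):
    """Return a 0..1 score; the weakest health factor determines the result."""
    calibration_freshness = max(
        0.0, 1.0 - days_since_calibration / calibration_interval_days)
    link_quality = 1.0 - packet_loss
    return min(battery_fraction, calibration_freshness, link_quality)


loss = packet_loss_ratio(expected=100, received=98)
print(confidence_score(battery_fraction=0.9, days_since_calibration=45,
                       packet_loss=loss))
```

Attaching this score to each reading lets the twin down-weight or ignore inputs from a sensor that is overdue for calibration, rather than trusting every value equally.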

6. Strategise Your Edge and Cloud Partitioning

Decide early which computations happen on the device (the edge) and which happen centrally (the cloud). This is a trade-off between latency, resilience, cost, and analytical depth.

- The Edge: Ideal for millisecond-level anomaly detection, filtering noise, and maintaining local safety logic when connectivity drops.

- The Cloud: Suited for heavy-lift simulations, long-term aggregation, and cross-fleet comparisons that define a mature digital twin.

- The Balance: A well-partitioned architecture ensures that connectivity issues don’t “blind” your local systems.
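The partitioning described in the list above can be sketched as an edge node that always evaluates its safety rule locally and buffers readings while the cloud is unreachable. The `EdgeNode` class, the trip limit, and the `upload` callback are hypothetical names used for illustration only.

```python
from collections import deque


class EdgeNode:
    """Runs safety logic locally and store-and-forwards readings to the cloud."""

    def __init__(self, trip_limit, buffer_size=1000):
        self.trip_limit = trip_limit
        # Bounded buffer: when full, the oldest readings are dropped first.
        self.buffer = deque(maxlen=buffer_size)
        self.shutdown = False

    def on_reading(self, value, cloud_online, upload):
        # The safety decision never waits on connectivity.
        if value > self.trip_limit:
            self.shutdown = True
        self.buffer.append(value)
        # Flush the backlog in order once the link is available.
        if cloud_online:
            while self.buffer:
                upload(self.buffer.popleft())


sent = []
node = EdgeNode(trip_limit=80.0)
node.on_reading(70.0, cloud_online=False, upload=sent.append)
node.on_reading(85.0, cloud_online=False, upload=sent.append)  # trips locally
node.on_reading(60.0, cloud_online=True, upload=sent.append)   # backlog flushed
print(node.shutdown, sent)
```

The bounded buffer is itself a design decision: it caps memory use on the device at the cost of losing the oldest data during a long outage.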

7. Build for Extensibility and Over-the-Air (OTA) Evolution

Digital twin capabilities often emerge after a product is already deployed, so your hardware should be a living platform, not a static box. However, this can only happen if evolution is part of your roadmap.

- Flexible Firmware: Enable OTA updates to adjust sampling rates, add edge logic, or refine filtering strategies as models mature.

- Extensible Data Schemas: Use schema-aware, adaptable formats that allow new variables to be introduced without breaking downstream pipelines.

- Scalable Protocols: Choose network protocols that can handle higher resolution and increased data flow as you transition from telemetry to simulation.
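To illustrate the extensible-schema point from the list above, the sketch below parses a versioned JSON message, insists on a small set of required fields, and tolerates anything new. The field names and the JSON encoding are assumptions; schema-aware binary formats can achieve the same effect with less bandwidth.

```python
import json

# Hypothetical envelope: every message must carry these fields.
REQUIRED_FIELDS = {"schema_version", "device_id", "timestamp", "readings"}


def parse_message(payload):
    """Validate the envelope but keep unknown fields instead of rejecting them."""
    message = json.loads(payload)
    missing = REQUIRED_FIELDS - set(message)
    if missing:
        raise ValueError(f"missing required fields: {sorted(missing)}")
    return message


def reading(message, name, default=None):
    """Look up one variable; older devices simply will not report newer ones."""
    return message["readings"].get(name, default)


v1 = ('{"schema_version": 1, "device_id": "dev-1", "timestamp": 1700000000, '
      '"readings": {"temp_c": 21.5}}')
v2 = ('{"schema_version": 2, "device_id": "dev-2", "timestamp": 1700000060, '
      '"firmware": "2.0", "readings": {"temp_c": 22.0, "humidity_pct": 40.0}}')

for payload in (v1, v2):
    msg = parse_message(payload)
    print(msg["device_id"], reading(msg, "temp_c"), reading(msg, "humidity_pct"))
```

Because the pipeline reads variables by name with a default, a firmware update that adds `humidity_pct` does not break consumers that only know about `temp_c`.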

If your product will never move beyond telemetry or forecasting, these principles can often be simplified. But if evolution toward system-level modelling is even a possibility, they are far easier to design from the outset than to retrofit later.

IoT sensor architecture trade-offs and pitfalls

Designing an IoT sensor network is less a pursuit of maximum capability than a sequence of deliberate optimisations. Every architectural decision, from sampling rates and compute partitioning to data schemas, enables certain outcomes while constraining others.

The core challenge with designing IoT sensor networks for digital twins is alignment: your technical foundation must match what the product is actually meant to become.

1. Data Fidelity vs Power Consumption

Higher-resolution data enables richer models. But that fidelity is a debt paid in energy, bandwidth, and cloud processing.

Before increasing the sampling rate, evaluate the system’s underlying physics. If you are monitoring slow-moving environmental variables, ultra-high-frequency sampling is often just expensive noise.

However, in systems modelling mechanical fatigue or high-speed transients, missing critical transient windows can materially distort the model. The sampling duty cycle must reflect the asset’s dynamics. While telemetry-only products can often operate on periodic heartbeats, IoT sensor networks for digital twins require precision to justify their storage and power costs.
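A back-of-envelope calculation shows how quickly sampling rate turns into battery life. The sketch below estimates average current from the duty cycle and converts it into days of operation. All figures (10 ms awake per sample, 20 mA active, 0.01 mA asleep, 1000 mAh capacity) are hypothetical, and the model ignores radio transmission, self-discharge, and temperature effects.

```python
def average_current_ma(sample_rate_hz, active_ms_per_sample, active_ma, sleep_ma):
    """Duty-cycle-weighted average current draw."""
    duty = min(1.0, sample_rate_hz * active_ms_per_sample / 1000.0)
    return duty * active_ma + (1.0 - duty) * sleep_ma


def battery_life_days(capacity_mah, avg_ma):
    """Idealised battery life: capacity divided by average draw."""
    return capacity_mah / avg_ma / 24.0


for rate_hz in (0.1, 1.0, 10.0):
    avg = average_current_ma(rate_hz, active_ms_per_sample=10.0,
                             active_ma=20.0, sleep_ma=0.01)
    print(rate_hz, round(avg, 4), round(battery_life_days(1000.0, avg)))
```

Under these assumptions each tenfold increase in sampling rate cuts battery life by roughly an order of magnitude, which is why the rate should follow from the asset's dynamics rather than from what the hardware can manage.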

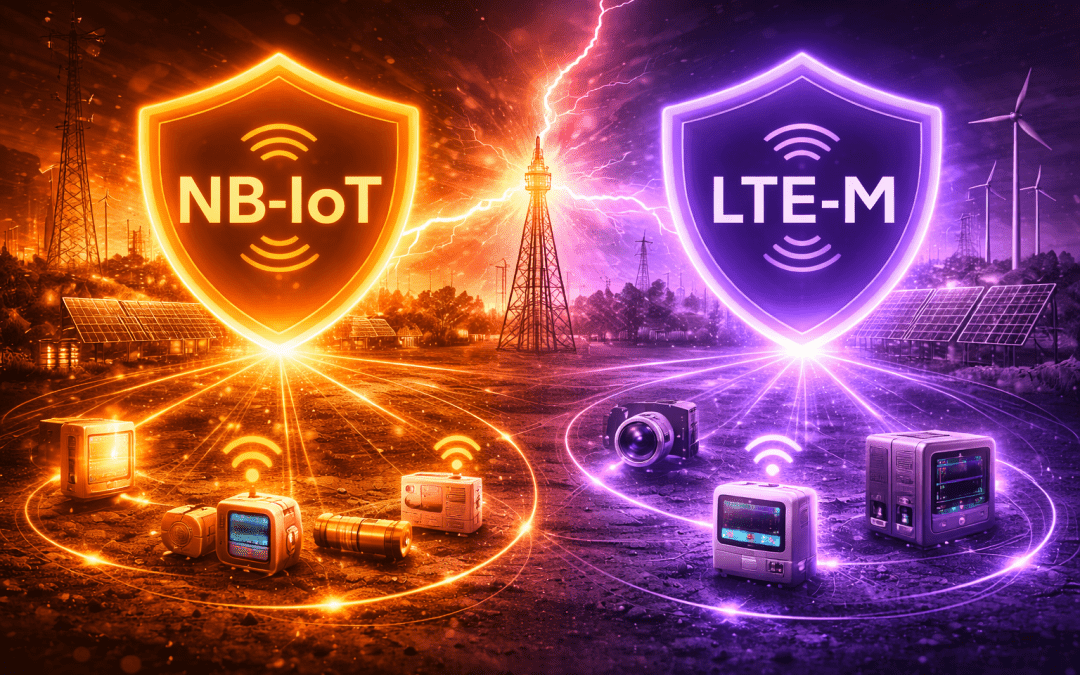

2. Edge Autonomy vs Hybrid Intelligence

The edge-versus-cloud debate is usually framed as a binary choice. In practice, the real question is where the ‘Source of Truth’ lives for each action. In other words, which location (i.e., the decentralised, local edge device or the centralised, remote cloud repository) holds the definitive, most current data and primary authority for decision-making?

Edge processing provides deterministic control loops and resilience during connectivity loss (i.e., instantaneous truth). Cloud infrastructure enables fleet-level aggregation, historical depth, and cross-system predictive analytics (i.e., long-term truth). The trade-off, therefore, is latency versus context.

A device needs sufficient autonomy to operate safely in isolation, but enough connectivity to contribute to broader system intelligence. The partitioning decision defines the failure domain and is thus an architectural rather than a cosmetic one.

3. Flexibility vs Validation Complexity

Extensibility (i.e., modular schemas, configurable firmware, and OTA updates) introduces optionality but also increases architectural debt. Every additional firmware pathway or schema branch expands the validation matrix and security surface area; therefore, testing becomes combinatorial, and certification cycles lengthen.
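The combinatorial growth is easy to underestimate, so here is a small sketch that enumerates a validation matrix. The firmware versions, schema versions, and sampling profiles are hypothetical placeholders for whatever options a real product exposes.

```python
from itertools import product


def validation_matrix(options):
    """Enumerate every combination of configuration options that needs testing."""
    names = list(options)
    return [dict(zip(names, combo))
            for combo in product(*(options[name] for name in names))]


options = {
    "firmware": ["1.0", "1.1", "2.0"],
    "schema": ["v1", "v2"],
    "sampling_profile": ["heartbeat", "burst", "continuous"],
}
print(len(validation_matrix(options)))  # 3 * 2 * 3 combinations

# Adding a single firmware branch multiplies, rather than adds to, the test load.
options["firmware"].append("2.1")
print(len(validation_matrix(options)))  # 4 * 2 * 3 combinations
```

In practice teams prune this matrix by declaring unsupported combinations, but each pruning rule is itself something that has to be documented and enforced.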

If your product roadmap includes evolution toward simulation or digital twin capability, this level of flexibility may be justified. However, if the use case is bounded and stable, over-engineering for a hypothetical digital twin could delay your commercial traction and time-to-market without delivering a clear return on your investment.

4. Model Ambition vs Measurement Reality

Digital twins are often presented as mirrors of physical systems. In practice, however, they are constrained by sensor drift, environmental noise, and latent variables that cannot be directly measured. Robust architectures for IoT sensor networks that feed digital twins acknowledge these inferential gaps: inputs carry confidence levels, noise is modelled explicitly, and assumptions are documented and visible.

A visually impressive 3D representation built on statistically filled gaps won’t deliver much operational insight. Therefore, ambition must remain grounded in what the system can genuinely observe; otherwise, the architecture becomes performative rather than diagnostic.
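One common way to let inputs carry confidence is to attach a variance to every reading and fuse overlapping measurements by inverse-variance weighting. The sketch below shows that technique under the assumption that sensor errors are independent and the variances are known; it is offered as one possible approach, not as the method any particular twin uses.

```python
def fuse(estimates):
    """Inverse-variance weighted fusion.

    estimates is a list of (value, variance) pairs with variance > 0.
    Returns (fused_value, fused_variance); noisier inputs get less weight.
    """
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    return value, 1.0 / total


# Two hypothetical temperature sensors; the second is four times noisier.
value, variance = fuse([(20.0, 0.25), (24.0, 1.0)])
print(round(value, 3), round(variance, 3))
```

The fused variance travels with the fused value, so downstream models can see how much to trust the result instead of receiving a bare number.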

5. Automated Provisioning vs Scaling Friction

Systems that perform well at twenty devices often struggle at two thousand: manual calibration, hard-coded offsets, and fixed metadata appear manageable at the beginning – but at scale, they become structural bottlenecks.

Scalable IoT systems require automated provisioning, standardised schemas, and device self-identification so that analytics layers, including digital twin models, can integrate new hardware without manual intervention.
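Device self-identification can be as simple as each unit announcing a manifest when it first connects. The sketch below shows a registry that validates such a manifest and makes the fleet queryable by sensor type. The manifest fields and the `DeviceRegistry` class are hypothetical; a real deployment would also authenticate the device before trusting anything it reports.

```python
# Hypothetical manifest contract shared by every device in the fleet.
REQUIRED_KEYS = {"device_id", "hardware_rev", "schema_version", "sensors"}


class DeviceRegistry:
    """Accepts self-describing manifests so new hardware needs no manual setup."""

    def __init__(self):
        self.devices = {}

    def register(self, manifest):
        missing = REQUIRED_KEYS - set(manifest)
        if missing:
            raise ValueError(f"manifest missing: {sorted(missing)}")
        self.devices[manifest["device_id"]] = manifest

    def devices_with(self, sensor_type):
        """List device IDs that report a given sensor type."""
        return sorted(
            device_id for device_id, manifest in self.devices.items()
            if any(s["type"] == sensor_type for s in manifest["sensors"])
        )


registry = DeviceRegistry()
registry.register({
    "device_id": "dev-001", "hardware_rev": "A", "schema_version": 1,
    "sensors": [{"type": "temperature", "unit": "degC"}],
})
registry.register({
    "device_id": "dev-002", "hardware_rev": "B", "schema_version": 2,
    "sensors": [{"type": "temperature", "unit": "degC"},
                {"type": "vibration", "unit": "g"}],
})
print(registry.devices_with("vibration"))
```

Because units and sensor types arrive with the device, the analytics layer does not depend on a hand-maintained spreadsheet of offsets and metadata.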

For products that will never extend beyond telemetry, this level of automation may be unnecessary. But if scale or simulation is part of your roadmap, early shortcuts will define your future limitations.

Final thoughts

The progression from telemetry to predictive analytics to digital twin modelling is less about defining a hierarchy of superiority and more about establishing where you are on the spectrum of intent. For many products, telemetry or predictive insight is the destination, not a stepping stone or a transitional phase. By designing a strong IoT architecture early on, you’ll treat potential trade-offs as strategic decisions and ensure that the system you’re building aligns with the intelligence you actually intend to deliver.

For organisations navigating that progression, the challenge is architectural rather than conceptual. Aligning embedded hardware, connectivity, cloud infrastructure, and modelling capability requires coordinated engineering across disciplines and clearly defining commercial priorities.

At Ignitec, we work with teams to define that alignment early: clarifying whether a product should remain a robust telemetry system, evolve toward predictive analytics, or support full digital twin modelling – and then engineering the sensor architecture accordingly.

We build infrastructure that supports the intelligence you actually intend to deliver.

FAQs

Why are IoT sensor networks important for digital twins?

IoT sensor networks provide real-time data that digital twins rely on to accurately model physical systems. They enable continuous monitoring of device states, environmental conditions, and performance metrics. Without a well-designed sensor network, a digital twin cannot provide reliable simulations or predictive insights.

How do IoT sensor networks support predictive analytics?

IoT sensor networks collect high-fidelity telemetry from devices and systems, which can be analysed to detect trends and anomalies. This historical and real-time data allows teams to forecast likely outcomes or failures before they occur. In essence, predictive analytics transforms raw sensor data into actionable foresight.

What is the difference between telemetry and a digital twin in IoT?

Telemetry refers to the automated collection and transmission of data from a device for monitoring purposes. A digital twin goes further by modelling the system’s components, interactions, and behaviour in a structured digital form. This allows for simulation, scenario testing, and closed-loop optimisation beyond simple observation.

When should a product use predictive analytics instead of a digital twin?

Predictive analytics is useful when historical trends and probabilistic forecasts provide sufficient insight for decision-making. Products like environmental forecasting sensors can deliver value without modelling the system’s full structure. A digital twin is only necessary when simulating system interactions or testing “what if” scenarios adds a meaningful operational advantage.

Which IoT devices are most suited for digital twin integration?

Devices with multiple sensors capturing dynamic or high-resolution data are best suited for digital twin integration. This includes industrial machinery, smart vehicles, or prototypes with complex interactions. The sensor network must support high-fidelity, temporally aligned data to enable accurate modelling.

Who should be involved in designing an IoT sensor network for a digital twin?

Designing a sensor network requires collaboration between hardware engineers, data scientists, and software developers. Each team ensures the network captures meaningful variables, manages data quality, and supports analytics requirements. Early alignment reduces the risk that architectural constraints will block future digital twin capabilities.

Why does sensor placement matter in digital twin modelling?

The accuracy of a digital twin depends on sensors capturing data where the relevant physics occurs. Even small deviations in placement can produce misleading readings or model errors. Correct placement ensures that simulations and predictions reflect the true state of the physical system.

How can sampling frequency affect a digital twin’s accuracy?

The sampling frequency determines how often sensors record data, which affects the resolution of system dynamics. Sampling too sparsely can miss critical transients, while sampling too frequently may waste power and bandwidth. Balancing frequency with the system’s physical behaviour ensures meaningful, actionable data.

What are common pitfalls when scaling IoT sensor networks for digital twins?

Common pitfalls include manual provisioning, inconsistent metadata, and a lack of automated calibration. These issues create bottlenecks as fleets grow from a few devices to thousands. Planning for automated setup, data standardisation, and health monitoring helps maintain the reliability of digital twins at scale.

When is edge processing preferable to cloud processing in IoT sensor networks?

Edge processing is ideal for real-time decisions, anomaly detection, or maintaining local safety logic during connectivity interruptions. Cloud processing excels at historical aggregation, fleet-level analysis, and heavy-lift simulations. The choice depends on latency requirements, resilience needs, and the scope of the desired analytics.

Which data features are most critical for digital twin fidelity?

Critical features are those that represent the system’s “ground truth” and capture meaningful changes in behaviour. Irrelevant or redundant variables can introduce noise and mislead models. Selecting high-integrity, proxy-capable signals ensures the twin accurately reflects the physical asset.

Who manages sensor drift in IoT networks?

Sensor drift is managed by the engineering team through routine calibration, environmental compensation, and long-term monitoring. Drift can gradually erode the accuracy of both telemetry and digital twin models. Without active management, predictions and simulations become unreliable.

Why is temporal alignment important in IoT sensor networks?

Temporal alignment ensures that all sensor readings are synchronised to a common time reference. Misaligned data can distort event sequences and lead to incorrect modelling. Accurate timing is essential for reconstructing system behaviour and testing “what if” scenarios.

How do IoT sensor networks enable “what if” scenario testing?

By continuously feeding a digital twin with real-time data, IoT sensor networks allow simulations of alternative actions or conditions. Teams can test hypothetical changes without affecting the physical system. This enables optimisation and risk-free experimentation.

What role does data provenance play in digital twin networks?

Data provenance tracks the origin, path, and transformations of each data point. It ensures that insights, simulations, and predictions are based on verifiable, trustworthy data. Provenance is particularly important for audit, compliance, and refining models over time.

When should a company consider building a twin-ready sensor network?

Companies should consider it if the product may evolve to require system-level modelling, closed-loop optimisation, or fleet-level predictive insights. Early decisions around sensor placement, sampling, and schema flexibility determine future feasibility. Retrofitting a digital twin later is often complex and costly.

Which IoT sensor network features support fleet-wide analysis?

Features include standardised data schemas, automatic device identification, and centralised aggregation capabilities. High-quality metadata ensures comparability across devices and locations. These enable cross-fleet simulations and digital twin analyses at scale.

Why is extensibility important for IoT sensor networks in digital twin applications?

Extensibility allows new sensors, variables, or firmware logic to be added without disrupting existing systems. It supports product evolution toward more sophisticated digital twins. Flexible design reduces the cost of later retrofits and avoids limiting future intelligence, although each added option also has to be validated.

How can event-based sampling improve efficiency in digital twin sensor networks?

Event-based sampling captures high-frequency data only when anomalies or changes occur. This reduces power consumption, storage, and bandwidth during steady-state conditions. It ensures critical moments are recorded without unnecessary resource use.

What is the difference between a predictive digital model and a full digital twin?

A predictive model analyses historical and real-time data to forecast likely outcomes. A full digital twin continuously simulates the system’s structure, interactions, and dynamics. Digital twins allow scenario testing and closed-loop optimisation, going beyond trend prediction alone.
